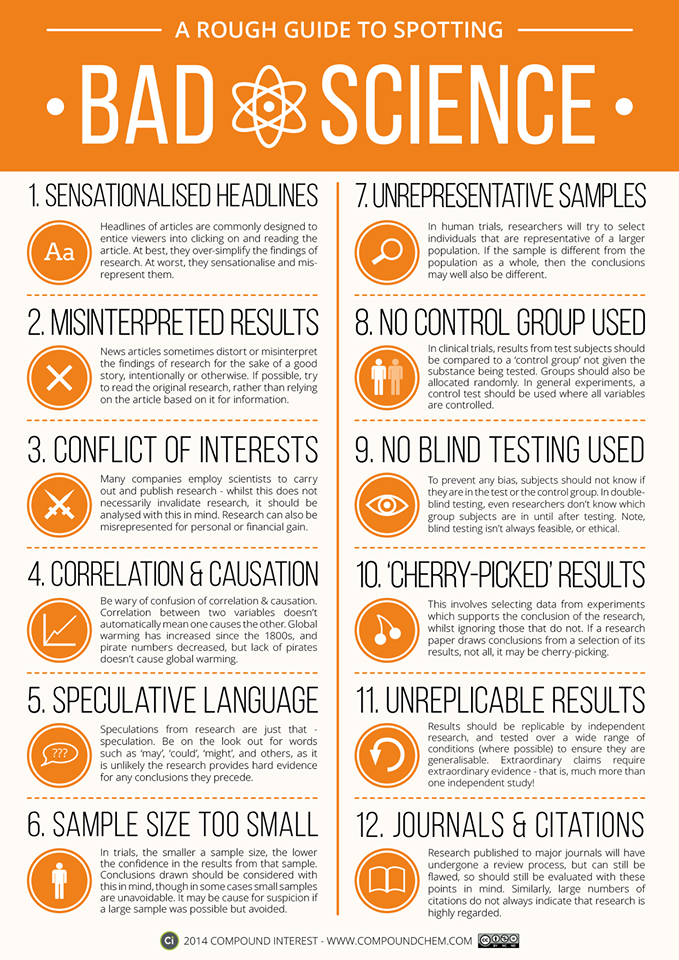

A Rough Guide to Spotting Bad Science

Most people get their science news from online news articles and rarely delve into the research those articles are based on. I therefore think it’s important that people can spot bad scientific methods, and can recognise when an article is being economical with the conclusions actually drawn from the research. That is what this graphic aims to help with. It is not a comprehensive overview, and the presence of any one of the points noted does not automatically mean the research should be disregarded. It is simply a rough guide to things to be alert to when reading science articles or evaluating research.